Signal over noise

A new framework for funding science in the 21st century

I’ve previously written about how the current administrative structures that support scientific progress in the US evolved, and why the current moment presents a real opportunity for positive evolution. But what does that change look like? How do we re-imagine the system for allocating funds in a way that simultaneously supports innovation and strengthens our ability to manage scarce resources? The only real way to answer these questions is to apply the tools of science, just as we would to solve any empirical problem. What has emerged from that effort is growing evidence that we can use large-scale scientific behavior as a lens for determining which topics are, or are not, worth pursuing. To see how that would work in practice, let’s start with a quick overview of the prevailing model for choosing which ideas receive support in the form of funding.

The journey of a scientific advance currently begins with an individual scientist requesting support for a particular idea. That request, in the form of a grant application, is sent to a panel of experts who give their opinions on its relative merits. Although review panels are commonly composed of two dozen or more scientists, in practice just two or three individuals typically determine the fate of any given proposal. This system was developed when science was much smaller, but both habit and necessity have conserved its form to a remarkable extent over many decades. The wisdom in soliciting expert opinion before making a consequential decision is clear, and convening a panel has historically been the way to do it. However, different experts may have different, equally valid perspectives on a proposal that are rooted in their individual experiences and expertise. In a resource-constrained environment those differences can generate enough noise to overwhelm any signal that flags a proposed line of inquiry as likely to produce important results [1-4]. We might attempt to improve the signal-to-noise ratio by asking for more opinions, but this is logistically unrealistic due to both practical constraints on the size of review meetings and the need to arrive at a verdict in a timely fashion.
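To make the scaling problem concrete, here is a minimal sketch of how reviewer noise shrinks as opinions are added. It rests on a simplifying assumption that is not drawn from the cited studies: each reviewer's score is an independent, equally noisy estimate of a proposal's underlying merit. The function names and the example numbers are illustrative only.

```python
import math

def standard_error(reviewer_sd: float, n_reviewers: int) -> float:
    """Noise in the mean score of n independent reviewers (assumed model)."""
    return reviewer_sd / math.sqrt(n_reviewers)

def reviewers_needed(reviewer_sd: float, target_se: float) -> int:
    """Smallest panel whose mean score reaches the target noise level."""
    return math.ceil((reviewer_sd / target_se) ** 2)

# Hypothetical numbers: a reviewer-to-reviewer spread of 1.0 score points.
print(standard_error(1.0, 4))       # noise in the mean of four reviewers
print(reviewers_needed(1.0, 0.25))  # panel size needed to halve that noise
```

Under this assumption, noise falls only with the square root of the number of reviewers, so halving it requires four times as many opinions. That is one way to see why simply enlarging review panels quickly becomes impractical.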

In their classic work on the sociology of science, Latour and Woolgar establish that scientists are motivated to take up or abandon a research problem based in part on their belief that its solution will represent a major advance [5]. The movement of practicing scientists towards or away from a specific area of research, then, provides an alternative means of assessing expert opinion that can be implemented at scale. The system for organizing scholarly communications described in Davis et al. [6] is easily adapted to this purpose. The borders of a research topic are defined by the networks generated by subject matter experts when pairs of papers are cited together; growth and stagnation are simply measured by the percentage of new papers that fall within those borders. The end result is that instead of being limited to asking what a handful of scientists think, we can observe what thousands actually do. Although still not infallible, this information can meaningfully guide and inform the judgment of decision-makers.
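The two measurements described above, counting co-cited pairs and tracking the share of new papers inside a topic, can be sketched in a few lines. This is an illustration on hypothetical toy data, not the implementation in Davis et al. [6]. The clustering step that draws topic borders is not shown, so topic membership is simply assumed to be given, and every identifier and function name is invented for the example.

```python
from collections import Counter
from itertools import combinations

def cocitation_counts(reference_lists):
    """Count how often each pair of papers appears in the same reference list."""
    pairs = Counter()
    for refs in reference_lists:
        # Sort so each unordered pair always gets the same key.
        for a, b in combinations(sorted(set(refs)), 2):
            pairs[(a, b)] += 1
    return pairs

def topic_share_by_year(papers, topic_members):
    """Percentage of each year's new papers that fall within a topic."""
    totals, in_topic = Counter(), Counter()
    for paper_id, year in papers:
        totals[year] += 1
        if paper_id in topic_members:
            in_topic[year] += 1
    return {y: 100.0 * in_topic[y] / totals[y] for y in sorted(totals)}

# Hypothetical data: three citing papers and their reference lists.
reference_lists = [["p1", "p2", "p3"], ["p1", "p2"], ["p2", "p3"]]
pairs = cocitation_counts(reference_lists)

# Hypothetical data: (paper id, publication year), plus an assumed topic.
papers = [("a", 2020), ("b", 2020), ("c", 2021),
          ("d", 2021), ("e", 2021), ("f", 2021)]
topic_members = {"a", "c", "d", "e"}
shares = topic_share_by_year(papers, topic_members)

print(pairs)
print(shares)
```

In this toy example the topic's share of new papers rises from 50% to 75% between the two years, which is the kind of relative growth the text refers to.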

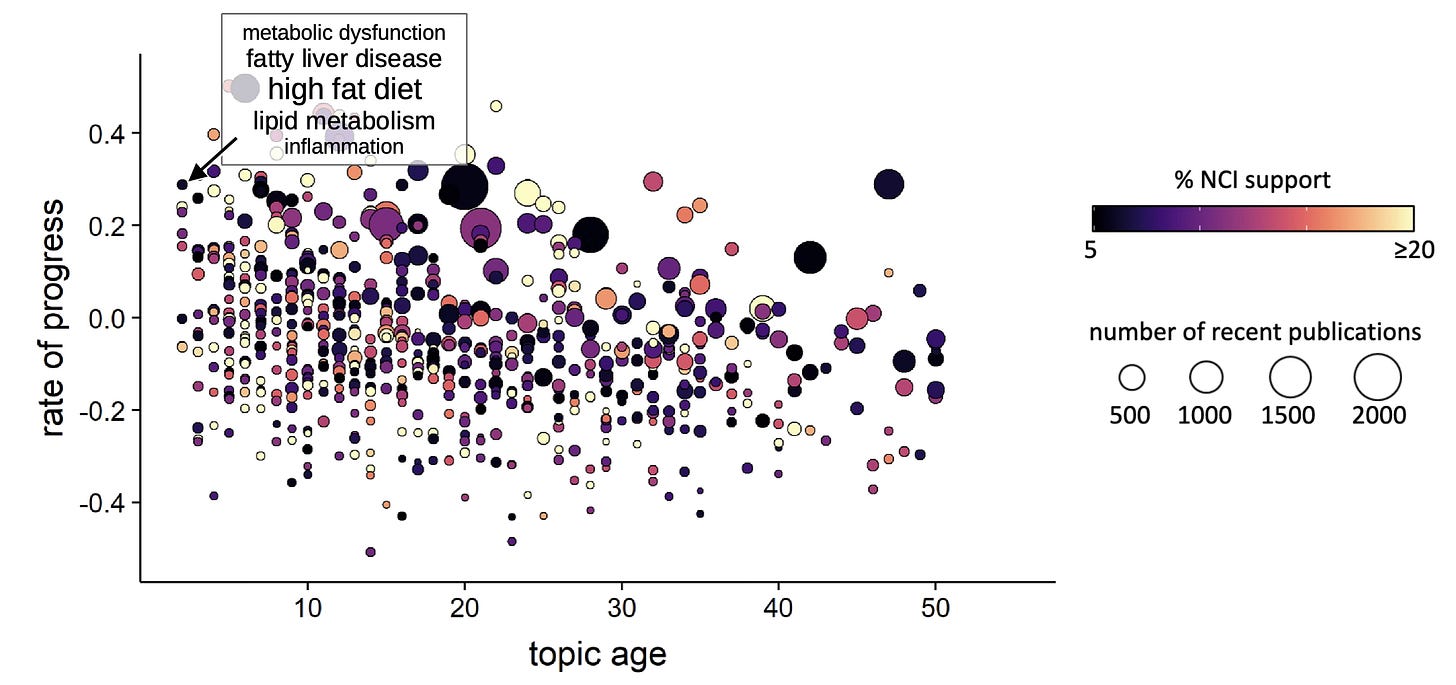

One obvious way to operationalize this concept would be for funders to concentrate their grantmaking on topics that are predicted to produce future breakthroughs [6]. However, breakthroughs are rare, and for the scientific enterprise to remain healthy, research investment must span a broad range of topics. Since our approach to predicting breakthroughs begins by systematically and agnostically identifying all topics, it is straightforward to collect the subset that corresponds to any particular problem – something as broad as “cancer” or as specific as “hemochromatosis” – and quantify the novelty (measured as age since first appearance), relative growth, and existing level of organizational investment for each (Fig. 1).

Figure 1. Cancer-relevant research topics, isolated from the co-citation network of all papers in PubMed by regularized Markov clustering. Each bubble represents a unique scientific topic (n = 687). Rate of progress integrates information on the number and field-normalized influence of papers on a given topic, both features of the breakthrough signal described by Davis et al. [6]. Age indicates the earliest detectable appearance of the topic depicted. One novel and rapidly advancing topic, the role of lipid metabolism, inflammation, and a high fat diet in cancer, is highlighted. MS in prep.

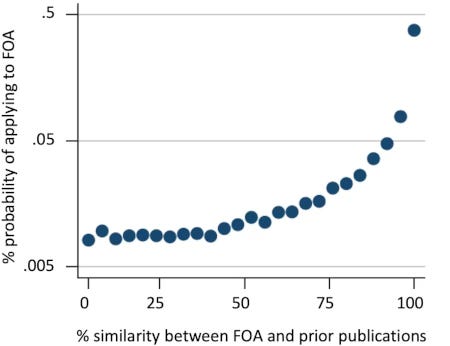

Using data to identify topics that have only recently appeared in the literature provides a way to ensure that funders invest in genuinely new ideas rather than those their staff are merely encountering for the first time. It also acts as a bulwark against a common failure mode that plagues programmatic initiatives: the development of a targeted funding opportunity that fails to attract any applications simply because no established constituency for it exists [7]. Finally, since the likelihood of a submission increases exponentially as a funding opportunity becomes more similar to an applicant’s area of expertise [8], knowing the size of the likely applicant pool allows funders to control demand more broadly, providing a potential mechanism to reduce hypercompetition (Fig. 2).

Figure 2. Probability of application as a function of the similarity between a given funding opportunity announcement (FOA) and the applicant’s prior publications. Applications from 110,000 scientists to 390 RFAs or PAS that made use of the R01 mechanism and included set-aside funds (solicited R01s) were analyzed; similarity was evaluated based on the use of terms from the Medical Subject Headings (MeSH) thesaurus. Applications made under the American Recovery and Reinvestment Act (ARRA) of 2009 were excluded. Figure reproduced from Myers [8].

Data like those shown in Figure 1 also provide a way for funders to identify topics that may already be adequately represented in a portfolio. Since funding decisions are inherently value judgments, they should never be based on computational analysis alone; decisions to reduce commitments in a specific area should only be made after further review by human authorities who can be held accountable for their choices. An area of research that appears to be making slow progress may be conducting clinical trials, contributing valuable technological development through the patent process, maintaining useful community resources, or some combination thereof. Divestment from an area should therefore be approached with caution, not least because innovative new areas of research may be born when a body of knowledge from larger, older fields is re-interpreted in light of one or more new discoveries.

Within the bounds of these caveats, we need to acknowledge that the study of some topics continues to attract funding well after the work has reached a point of diminishing returns. Discarding old ideas is an integral part of scientific progress; fields naturally lose momentum as new evidence and models supersede the old, and a reduction in the number and influence of papers on a given topic is one sign of decay [9]. We cannot expect to support new areas through budget increases alone. Indexing the reallocation of resources to the scholarly activity of the scientific community, using a carefully field-normalized metric like the Relative Citation Ratio [10], can help ensure that scarce dollars are not disproportionately spent on incremental advances. It should go without saying that any topics flagged by data, whether for deprioritization or support, should be fully disclosed to applicants up front, before they commit their time and effort to preparing and submitting applications.

A concern that commonly arises in conversations about incorporating data into decision-making is that people and resources might be funneled into areas that currently show promise at the expense of those whose potential will become apparent only at a later date; that is, we might pick the “wrong” areas at the expense of the “right” ones. This argument overlooks the fact that our current system already distributes resources unevenly across the scientific landscape based on an imperfect ability to predict the future [11]. It also assumes that the scientific ecosystem is perfectly elastic, which it is not. Changing fields is not frictionless; the entry of researchers into a new area is constrained by their training and expertise. Additionally, since the scientific focus of many researchers and donors reflects a personal connection to a given problem, they will maintain a constant focus on it regardless of how distant a solution might seem.

The true risk, as Vannevar Bush wrote, comes from the daft belief that if one does nothing one does not make mistakes [12]. Our fear that any change we make may be for the worse has left us unable to substantially reform decision-making processes that we know are flawed. Data isn’t a panacea for the problems of the research enterprise, but including it in our funding deliberations does not mean dismantling peer review or surrendering consequential choices to an algorithm. It simply means providing people already involved in decisions with an orthogonal way of checking some of their assumptions. Would that lead to better outcomes? The only way to find out is to try it and see.

References

[1] D. Kaplan, N. Lacetera, and C. Kaplan, “Sample Size and Precision in NIH Peer Review,” PLOS ONE, vol. 3, no. 7, p. e2761, Jul. 2008, doi: 10.1371/journal.pone.0002761.

[2] E. L. Pier et al., “Low agreement among reviewers evaluating the same NIH grant applications,” Proc. Natl. Acad. Sci., vol. 115, no. 12, pp. 2952–2957, Mar. 2018, doi: 10.1073/pnas.1714379115.

[3] P. S. Forscher, M. Brauer, F. Azevedo, W. T. L. Cox, and P. G. Devine, “How many reviewers are required to obtain reliable evaluations of NIH R01 grant proposals?,” 2019, doi: 10.31234/osf.io/483zj.

[4] V. E. Johnson, “Statistical analysis of the National Institutes of Health peer review system,” Proc. Natl. Acad. Sci., vol. 105, no. 32, pp. 11076–11080, Aug. 2008, doi: 10.1073/pnas.0804538105.

[5] B. Latour and S. Woolgar, Laboratory Life: The Construction of Scientific Facts. Princeton University Press, 1986.

[6] M. T. Davis et al., “Prediction of transformative breakthroughs in biomedical research,” 2025, doi: 10.64898/2025.12.16.694385.

[7] J. Lorsch, “NIH’s Path to a Simpler Funding Opportunity Landscape.” [Online]. Available: https://web.archive.org/web/20260330013627/https://grants.nih.gov/news-events/nih-extramural-nexus-news/2026/03/nihs-path-to-a-simpler-funding-opportunity-landscape

[8] K. Myers, “The Elasticity of Science,” Am. Econ. J. Appl. Econ., vol. 12, no. 4, pp. 103–34, 2020, doi: 10.1257/app.20180518.

[9] C. K. Singh, E. Barme, R. Ward, L. Tupikina, and M. Santolini, “Quantifying the rise and fall of scientific fields,” PLOS ONE, vol. 17, no. 6, p. e0270131, Jun. 2022, doi: 10.1371/journal.pone.0270131.

[10] B. I. Hutchins, X. Yuan, J. M. Anderson, and G. M. Santangelo, “Relative Citation Ratio (RCR): A New Metric That Uses Citation Rates to Measure Influence at the Article Level,” PLOS Biol., vol. 14, no. 9, p. e1002541, Sep. 2016, doi: 10.1371/journal.pbio.1002541.

[11] T. A. Hoppe et al., “Topic choice contributes to the lower rate of NIH awards to African-American/black scientists,” Sci. Adv., vol. 5, no. 10, p. eaaw7238, Oct. 2019, doi: 10.1126/sciadv.aaw7238.

[12] V. Bush, Pieces of the Action. Stripe Press, 2022.